SIDKIT

A text-to-hardware system that turns prompts into synth-game consoles. It teaches an AI agent the qualities and intricacies of interaction, sound, and design through human-verified benchmarks, and it learns from every mistake via recursive error learning, so each build gets cheaper and faster.

The Thesis

Everyone will be vibe coding. SIDKIT extends it into the physical world — a living, reconfigurable instrument designed for agent collaboration. Users move from Text → Code → Firmware → Sound without leaving the browser.

The Challenge

How do you build AI-native hardware from the ground up, so that agents can code games, synthesis modules, and sequencers from nothing more than a voice or text prompt? No device currently allows that. What is the optimal interface for no-coders and beginners? How do you learn with AI? How do you teach people to articulate complex tasks and features?

Solution: thanks to its component and design systems, SIDKIT can flash firmware directly from prompts. Before committing a flash, it previews the interactive device in the browser using WASM. The WASM version mirrors the hardware, so you hear the result before flashing, and the digital twin ensures that what you hear is what you get. This allows faster iteration and abstracts the coding away from users. Errors and their solutions are saved in a database, allowing agents to look up common problems.

The Category: Text to Hardware

Same Teensy, different device. Via prompt it becomes:

- A wavetable synthesiser

- A Pac-Man melody generator

- A 4-track step sequencer

- A hybrid engine (physical modelling + SID chip emulation)

- A Zelda-style adventure with procedural audio

You're not generating firmware — you're generating devices.

Triple-Agent Architecture

| Agent | Function | LLM | Stack |

|---|---|---|---|

| Builder | C++ code generation, error fixing, build loop | Frontier LLM | Rust Agent + Toolchain |

| Reviewer | Fresh-context code review, SDK compliance | Fast LLM | Adversarial Check |

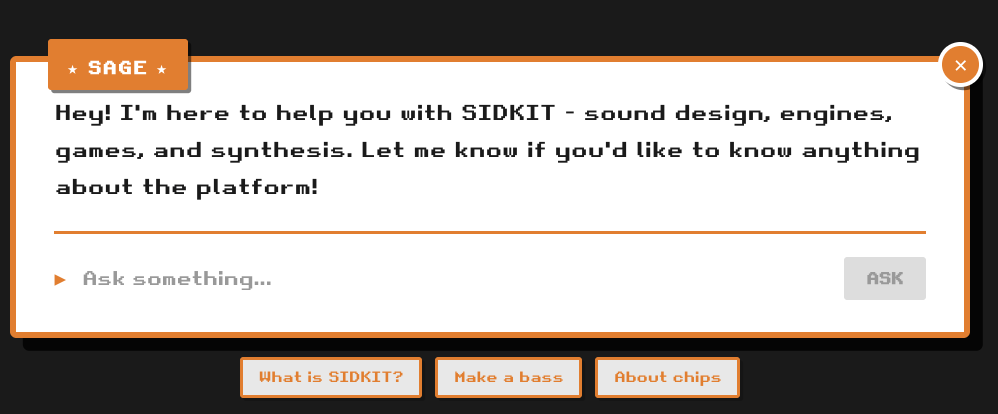

| SAGE | Real-time sound design, SysEx generation (standalone) | Fast LLM | MCP Tools |
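The Builder and Reviewer rows above can be read as two gates in a single loop. The following is a minimal sketch of that idea, not SIDKIT's actual agent code: `BuildDeps`, `buildWithReview`, and the three callbacks are hypothetical names introduced purely for illustration, and the real system may order or combine these steps differently.

```typescript
// Hypothetical types: none of these names are taken from the SIDKIT codebase.
type CompileResult = { ok: true } | { ok: false; errors: string[] };
type ReviewResult = { approved: boolean; notes: string[] };

interface BuildDeps {
  // Builder: returns C++ source for a prompt, given feedback from earlier attempts.
  generate(prompt: string, feedback: string[]): Promise<string>;
  // Toolchain: compiles the source and reports any errors.
  compile(source: string): Promise<CompileResult>;
  // Reviewer: receives only the source (fresh context), not the Builder's history.
  review(source: string): Promise<ReviewResult>;
}

interface BuildOutcome {
  source: string | null; // null when no attempt passed both gates
  attempts: number;
  feedbackLog: string[]; // every compiler error and review note collected
}

async function buildWithReview(
  prompt: string,
  deps: BuildDeps,
  maxAttempts = 3,
): Promise<BuildOutcome> {
  const feedbackLog: string[] = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const source = await deps.generate(prompt, [...feedbackLog]);

    // Gate 1: the code must compile.
    const compiled = await deps.compile(source);
    if (!compiled.ok) {
      feedbackLog.push(...compiled.errors);
      continue;
    }

    // Gate 2: the code must pass the fresh-context review.
    const reviewed = await deps.review(source);
    if (reviewed.approved) {
      return { source, attempts: attempt, feedbackLog };
    }
    feedbackLog.push(...reviewed.notes);
  }
  return { source: null, attempts: maxAttempts, feedbackLog };
}
```

Passing the dependencies in as callbacks keeps the loop testable with fakes, and the returned `feedbackLog` is the kind of data a recursive-learning step could store.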

System Flow

Pipeline

Text (natural language) → Code (generated C++) → Firmware (compiled binary) → Hardware (Teensy 4.1)

Design Decisions

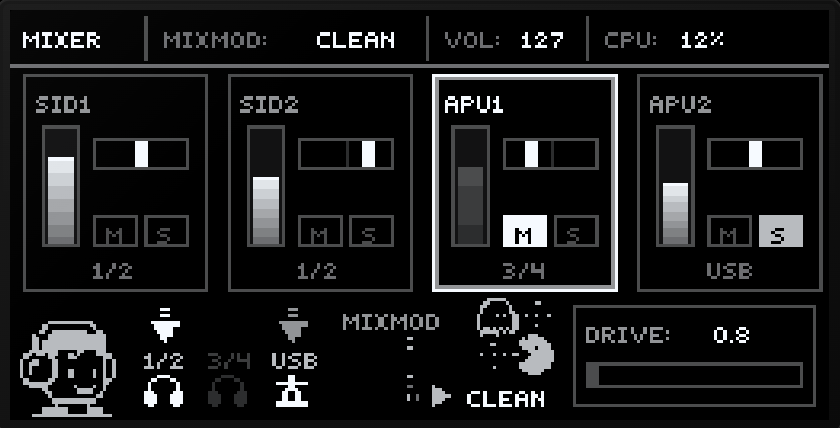

- Multi-Engine Architecture: 5 emulation engines (ESFM, APU, reSID, Amiga, YM2612), swappable in real time. Sequences can switch chip emulations mid-melody, covering classic retro consoles and DOS sound chips.

- Semantic Knowledge Base: 150+ documents covering chips, synthesis methods, games, and SDK patterns, searchable via embeddings.

- MCP Tools (SAGE): 9 specialised tools for sound design, knowledge retrieval, and real-time synth control.

- Dual Design Systems: two parallel component libraries, one for the firmware OLED (C++/U8g2) and one for the WebUI (React/TypeScript).

- Schema-Driven Architecture: 12 structured schemas (parameters, SysEx, presets, engines, UI components) act as the single source of truth for firmware ↔ WebUI sync.

- R&D Pipeline: 40+ prototypes hand-tested and benchmarked, developed iteratively from concept to hardware.
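To make the schema-driven idea concrete, here is a small sketch of how one parameter schema entry could drive both value validation and SysEx encoding on the WebUI side. Everything specific is an assumption: the `ParamSchema` fields, the `filter.cutoff` entry, the parameter number, and the six-byte message layout are invented for illustration and are not SIDKIT's real schema or protocol. The only fixed facts are the SysEx framing bytes (`0xF0` and `0xF7`), the 7-bit limit on data bytes, and `0x7D`, the manufacturer ID the MIDI specification reserves for non-commercial use.

```typescript
// Hypothetical schema entry: field names and values are illustrative only.
interface ParamSchema {
  id: number; // 7-bit parameter number (0-127)
  name: string;
  min: number;
  max: number;
}

const CUTOFF: ParamSchema = { id: 0x10, name: "filter.cutoff", min: 20, max: 20000 };

// Clamp a value to the schema range and scale it to a 14-bit integer (0-16383).
function toFourteenBit(schema: ParamSchema, value: number): number {
  const clamped = Math.min(schema.max, Math.max(schema.min, value));
  const normalised = (clamped - schema.min) / (schema.max - schema.min);
  return Math.round(normalised * 0x3fff);
}

// Frame a parameter change as SysEx: F0 <manufacturer> <param> <msb> <lsb> F7.
// Every data byte is masked to 7 bits, as SysEx payloads require.
function encodeParamSysEx(schema: ParamSchema, value: number): Uint8Array {
  const v = toFourteenBit(schema, value);
  return Uint8Array.of(0xf0, 0x7d, schema.id & 0x7f, (v >> 7) & 0x7f, v & 0x7f, 0xf7);
}
```

Because the range lives in the schema rather than in the encoder, the same entry could also be used to generate the firmware-side decoder and the matching UI control. In a browser, bytes like these could be passed to the Web MIDI API's `MIDIOutput.send()`, provided SysEx access has been granted.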

Hardware Platform

| MCU | Teensy 4.1 (ARM Cortex-M7 @ 600MHz) |

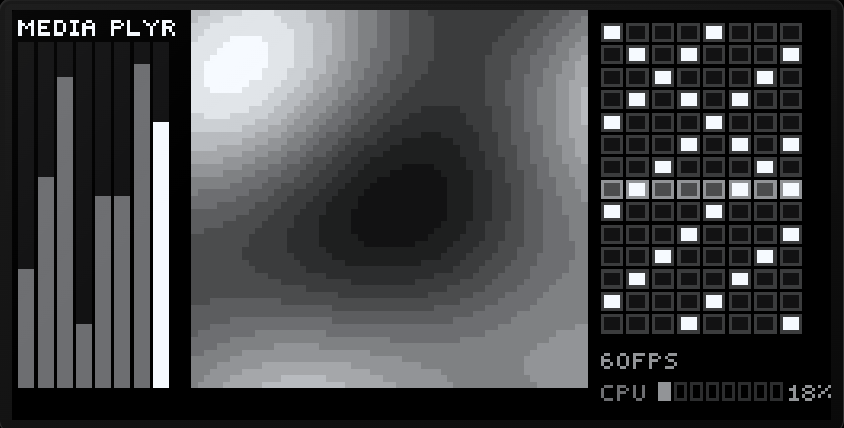

| Display | 256x128 4-bit greyscale OLED |

| Controls | 8 encoders + 24 buttons |

| Audio | 2x stereo + USB Audio (48kHz) |

| Architecture | 4 engine slots, 1x sequencer slot 1x game slot, any sound engine in any slot |

Games as Sequencers

Games aren't just sound effects — they're melody generators. Every action triggers musical events that can be recorded and looped.

Pac-Man meets Orca. The player navigates a maze while falling notes hit the character, creating melodies. Gameplay becomes composition.

A Lemmings-inspired rhythm pathfinder. AI creatures walk toward exits that trigger drums. Manipulate obstacles to create rhythmic variations.

A probability-driven step sequencer with sprite animations. Raindrops fall with weighted randomness, and each hit triggers a note, so visual patterns become generative compositions. Selected tracks trigger sprite animations for engagement and visual impact.
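The weighted randomness described here can be sketched as a per-step probability check. This is an illustrative model rather than the firmware's implementation: the `Step` shape and `playPass` are hypothetical names, and the seeded generator (the widely used mulberry32 routine) is swapped in only so that a pattern can be replayed from its seed.

```typescript
// Small seeded PRNG (mulberry32) so a pattern can be reproduced from its seed.
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296; // value in [0, 1)
  };
}

// Hypothetical step shape, for illustration only.
interface Step {
  note: number; // MIDI note number
  probability: number; // 0 = never fires, 1 = always fires
}

// Evaluate one pass over the pattern.
// Returns the note for each step that fired and null for each silent step.
function playPass(steps: Step[], random: () => number): (number | null)[] {
  return steps.map((s) => (random() < s.probability ? s.note : null));
}

const pattern: Step[] = [
  { note: 60, probability: 1 }, // always plays
  { note: 63, probability: 0.5 }, // plays about half the time
  { note: 67, probability: 0 }, // never plays
];
const pass = playPass(pattern, mulberry32(42));
```

Each call to `playPass` with a fresh generator state yields a different variation, while reusing the same seed replays the same one, which is useful when a generated pattern is worth keeping.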

Game of Life meets step sequencer. A grid driven by cellular automata and L-systems evolves over time, modulating playback speed and pitch across selected waveforms on active steps. Users can lock parameters per step and swap the primitive AI algorithms in real time to affect the modulation.
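As a rough illustration of the cellular-automaton half of this idea, the sketch below advances a grid by one generation of Conway's Game of Life and then reads each column as a sequencer step. The mapping is an assumption made for the example (a column is active if any cell is alive, and the live-cell count becomes a pitch offset), the wrapping edges are a choice rather than a known detail, and L-systems and playback-speed modulation are left out.

```typescript
type Grid = boolean[][];

// Advance Conway's Game of Life by one generation on a wrapping (toroidal) grid.
function nextGeneration(grid: Grid): Grid {
  const rows = grid.length;
  const cols = grid[0].length;
  return grid.map((row, r) =>
    row.map((alive, c) => {
      let neighbours = 0;
      for (let dr = -1; dr <= 1; dr++) {
        for (let dc = -1; dc <= 1; dc++) {
          if (dr === 0 && dc === 0) continue;
          if (grid[(r + dr + rows) % rows][(c + dc + cols) % cols]) neighbours++;
        }
      }
      // Survive with 2 or 3 neighbours; a dead cell is born with exactly 3.
      return alive ? neighbours === 2 || neighbours === 3 : neighbours === 3;
    }),
  );
}

// Hypothetical mapping from grid columns to sequencer steps.
interface LifeStep {
  active: boolean; // true if any cell in the column is alive
  pitchOffset: number; // number of live cells, read as semitones
}

function columnsToSteps(grid: Grid): LifeStep[] {
  const steps: LifeStep[] = [];
  for (let c = 0; c < grid[0].length; c++) {
    let live = 0;
    for (const row of grid) if (row[c]) live++;
    steps.push({ active: live > 0, pitchOffset: live });
  }
  return steps;
}
```

Calling `nextGeneration` once per bar and re-reading the columns would make the pattern drift over time. Per-step parameter locks could then be modelled as steps that ignore the grid.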

What I Learned So Far

More flexible display.

I began with a 128x64 mono OLED. It works, and keeping it expands SIDKIT into a lower-cost tier for the community. But if I were starting fresh, I'd go straight to 256x128 4-bit greyscale from day one. It removes an entire abstraction layer and allows detailed shaders and sprite animations, even ports of old games for brave nerds and hackers.

Less is more.

The original design had three separate cloud agents coordinating over HTTP. It worked but added complexity for no real gain. Consolidating to one local agent making two API calls — build and review — made it simpler, cheaper, and far easier to debug. More tokens saved, more time for experimenting, less waiting for agents to finish.

Taste and Aesthetics Research.

You can't just tell an AI to make something sound good. I'm investigating how to teach agents taste by building a volume of quality examples: UX patterns, design decisions, sound intricacies, where distortion sounds pleasant, where interaction breaks down. 15 years of playing synths and designing visuals are going into human-verified benchmarks. It's ongoing research, but important: it should cut the number of iterations significantly.

Agent-to-Hardware Protocol (A2HW)

1. Preview Layer: WASM synth mirrors hardware

2. SysEx Control: real-time changes without reflashing

3. Recursive Learning: every error collected, every fix fed back

4. Digital Twin: WebUI and hardware UI stay in sync

5. Taste Layer: teaches the agent the qualities and intricacies of interaction, sound, and the design system
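The Recursive Learning layer can be pictured as a lookup table from normalised error messages to fixes that worked before. The sketch below is an in-memory stand-in under stated assumptions: `ErrorMemory`, `errorSignature`, and the normalisation rules are hypothetical, and a real deployment would presumably persist the records in a database rather than a `Map`.

```typescript
// Hypothetical record shape; names are illustrative, not SIDKIT's schema.
interface KnownFix {
  signature: string;
  fix: string;
  hits: number; // how many times this class of error has been recorded
}

// Strip file locations and quoted identifiers so that the same class of
// compiler error maps to the same key regardless of where it occurred.
function errorSignature(message: string): string {
  return message
    .replace(/\S+\.(?:cpp|h|hpp|ino):\d+(?::\d+)?:?/g, "")
    .replace(/'[^']*'/g, "'_'")
    .replace(/\s+/g, " ")
    .trim()
    .toLowerCase();
}

class ErrorMemory {
  private fixes = new Map<string, KnownFix>();

  // Store (or update) the fix that resolved this error.
  record(message: string, fix: string): void {
    const signature = errorSignature(message);
    const existing = this.fixes.get(signature);
    if (existing) {
      existing.fix = fix;
      existing.hits += 1;
    } else {
      this.fixes.set(signature, { signature, fix, hits: 1 });
    }
  }

  // Return a previously recorded fix for the same class of error, if any.
  lookup(message: string): KnownFix | undefined {
    return this.fixes.get(errorSignature(message));
  }
}
```

On a later build, a hit from `lookup` could be handed to the agent alongside the compiler output, so that a known problem is resolved in one pass instead of being rediscovered. That is one plausible way builds could get cheaper over time.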

SIDKIT isn't just a multi-engine synth with sequencers and games, which is cool in its own right. It's an agent-powered platform for generating devices.

Stack

Next.js 15 • React • TypeScript • WASM (reSID) • Rust • Axum • C++ • PlatformIO • MCP Server • Cloudflare Workers/D1/Vectorize